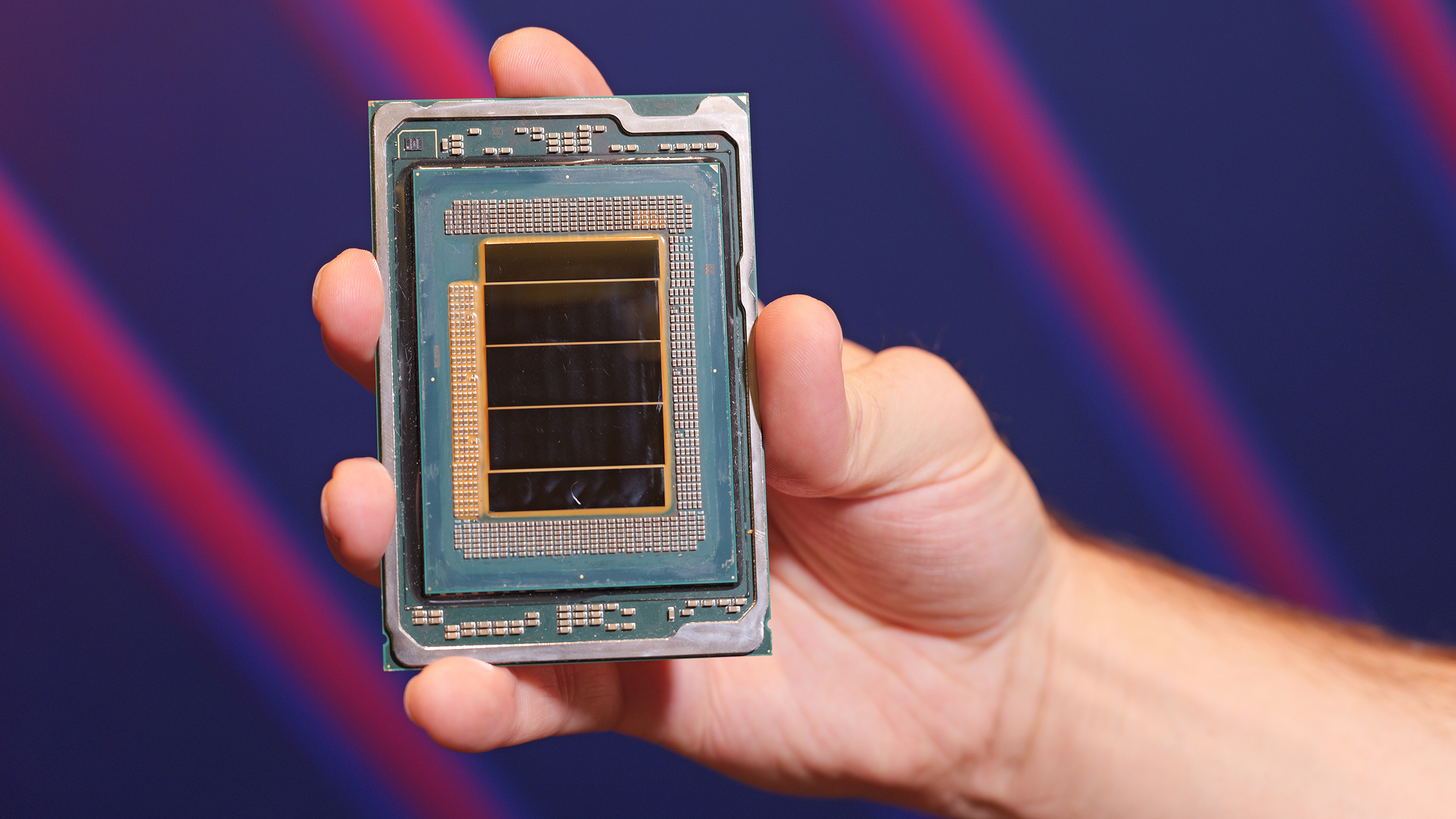

Data center operators and enterprise IT buyers are still grappling with one of the most unexpected bottlenecks in the AI boom: a severe shortage of traditional server CPUs. What began as a GPU-dominated crunch has now spilled over into x86 processors from Intel and AMD, with lead times stretching to six months in some markets and prices climbing 10-15%, with further increases possible in the coming quarter.

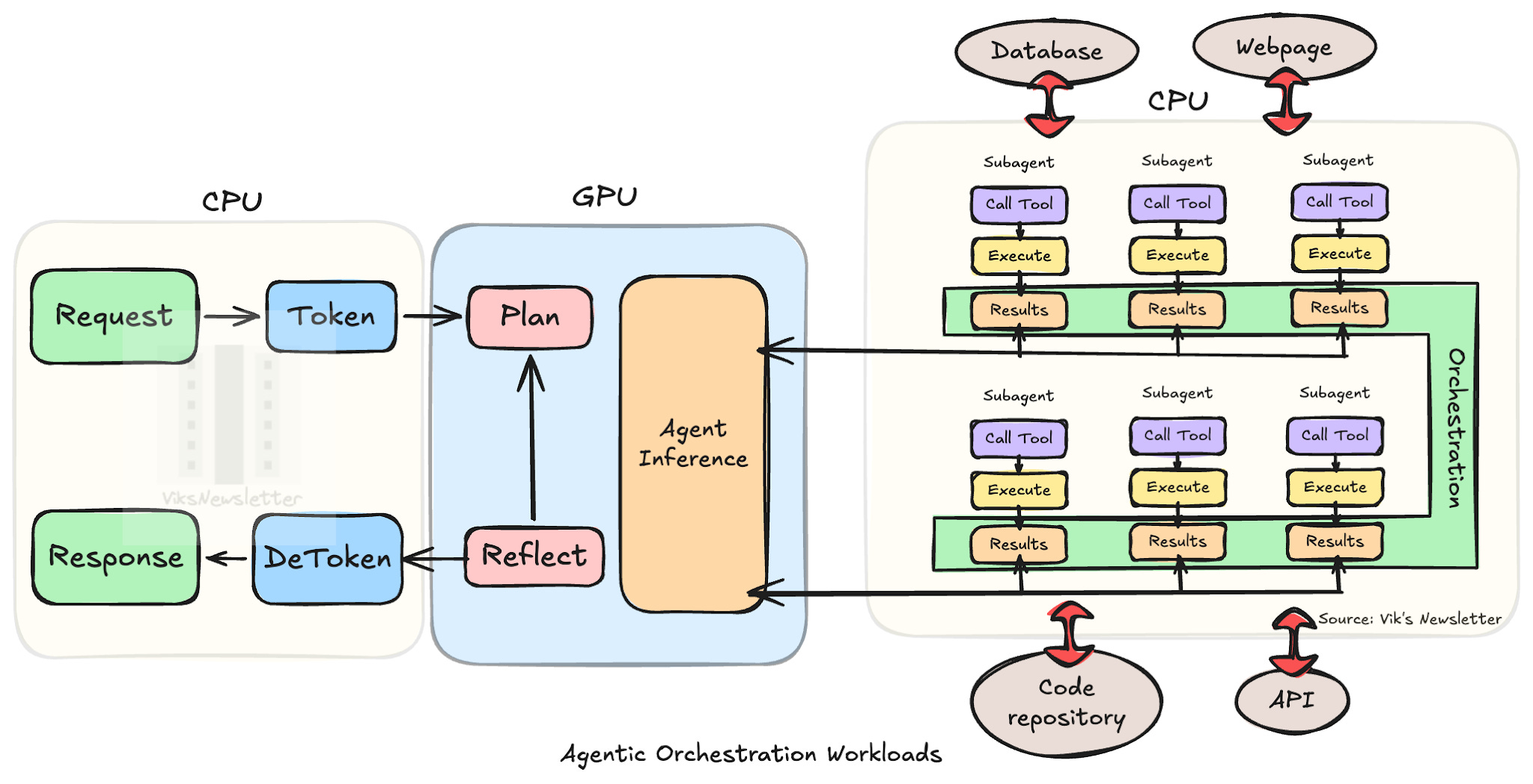

As of early April 2026, the situation remains tight. Intel has warned customers, particularly in China, of extended delays for Xeon server chips, while AMD has seen lead times push to 8-10 weeks. Both companies are diverting capacity toward high-priority AI data center builds, leaving PC makers and smaller enterprises scrambling. The root cause is a surprising shift toward “agentic AI” workloads, in which autonomous AI agents require far more general-purpose CPU horsepower for orchestration, inference coordination, and real-time decision-making than early large language model training ever did.

Intel and AMD Respond with Price Hikes and Capacity Shifts

Intel confirmed recent server CPU price increases of roughly 10-15% for OEM customers, with some reports pointing to a cumulative 30% hike across multiple rounds in 2026. The company is ramping internal production and increasing 2026 capex guidance after an unexpected late-2025 demand surge depleted inventories. AMD CEO Lisa Su noted that CPU demand “far exceeded expectations,” driven by inference and agentic workloads, and the company is forecasting strong double-digit server CPU market growth this year.
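To see how per-round increases of 10-15% can add up to a cumulative figure near 30%, here is a minimal sketch of the compounding arithmetic. The number of rounds and the per-round values are assumptions chosen for illustration; the reports cited above do not break the hikes down this way.

```python
def cumulative_increase(rounds):
    """Compound a sequence of fractional price increases into one overall increase.

    Each entry is a fraction, e.g. 0.10 for a 10% hike.
    """
    total = 1.0
    for rate in rounds:
        total *= 1.0 + rate
    return total - 1.0


# Hypothetical scenarios (assumed, not reported): two or three rounds
# at either end of the 10-15% per-round range.
scenarios = {
    "two rounds at 10%": [0.10, 0.10],
    "two rounds at 15%": [0.15, 0.15],
    "three rounds at 10%": [0.10, 0.10, 0.10],
}

for label, rounds in scenarios.items():
    print(f"{label}: {cumulative_increase(rounds):.1%} cumulative")
```

Under these assumed scenarios, two rounds land between roughly 21% and 32%, so a cumulative hike of about 30% is consistent with two rounds near the top of the range or three near the bottom.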

Analysts at KeyBanc and Omdia expect the crunch to peak in Q2 2026 before any meaningful relief. Intel’s earliest yield improvements on new capacity could start easing constraints by late Q2, but full normalization may not arrive until late 2026 or even 2027 for some SKUs. In the meantime, hyperscalers and large cloud providers are locking in long-term agreements (LTAs) to secure allocation, leaving smaller buyers with shorter quote windows and narrower configuration options.

DRAM and HBM4: The Memory Side of the Crunch

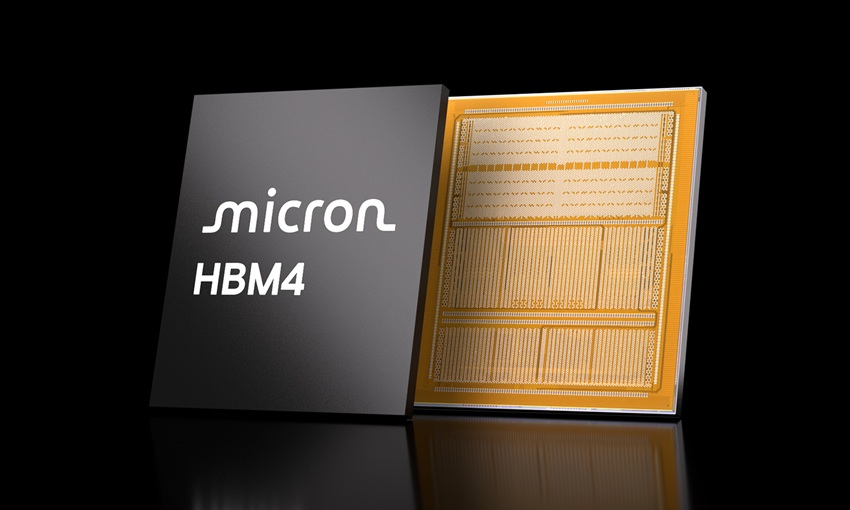

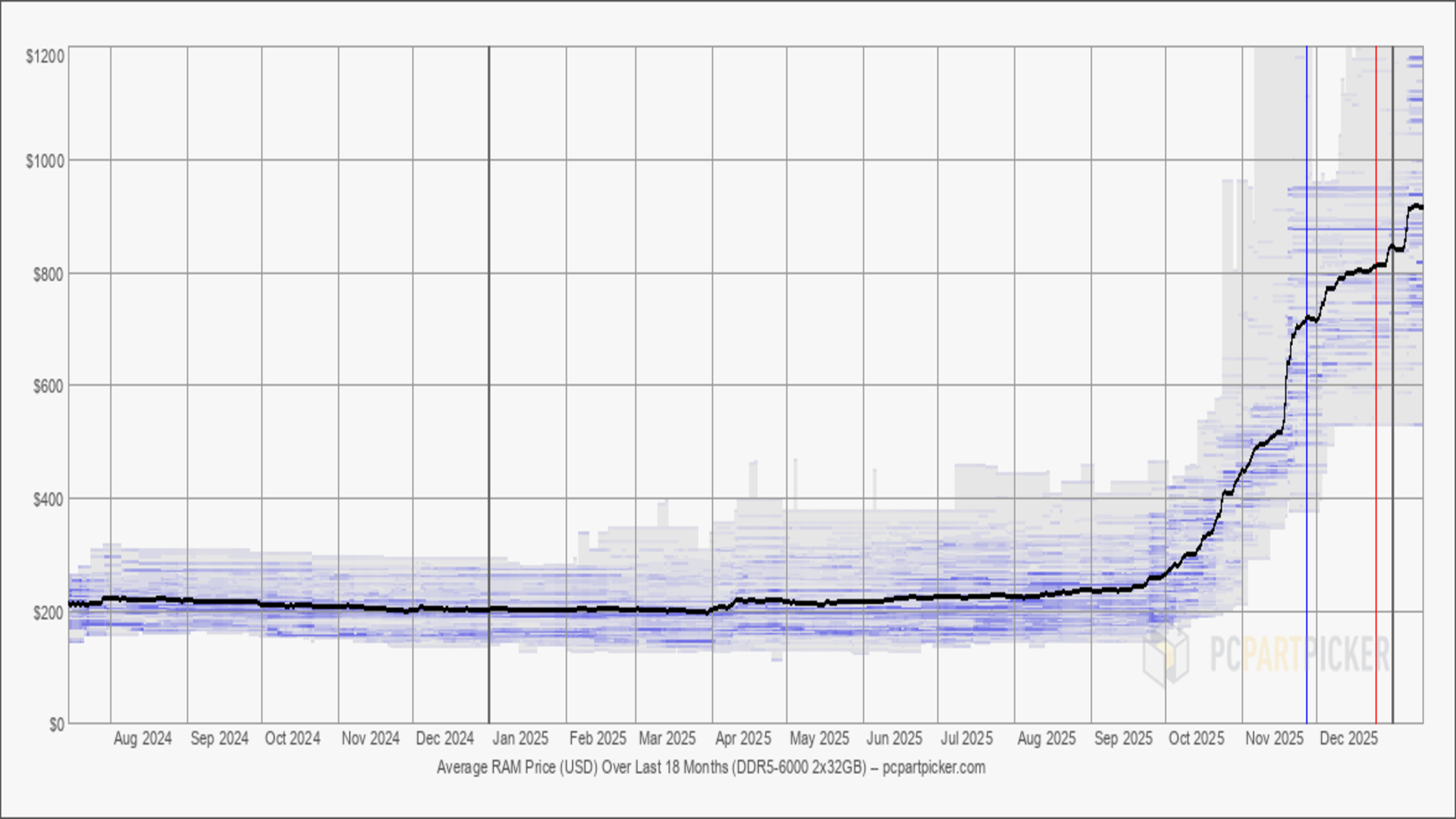

The CPU shortage is compounded by a parallel memory supercycle. Conventional DRAM contract prices are projected to rise another 58-63% quarter-over-quarter in Q2 2026, with some server-grade modules already up 80-90% since late 2025. AI servers consume 10-20x more memory than traditional servers, and suppliers have shifted wafer capacity toward high-margin HBM, tightening supply for standard DDR5 RDIMMs.
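For buyers sizing a budget against the projected 58-63% quarter-over-quarter rise, a rough sketch of the range calculation follows. The spend figure is a hypothetical placeholder, and the sketch assumes the projected contract-price rise applies uniformly to the buyer's memory mix, which may not hold in practice.

```python
def projected_cost_range(current_cost, low_rise, high_rise):
    """Return (low, high) projected cost after one quarter-over-quarter rise.

    Rises are fractions, e.g. 0.58 for a 58% increase.
    """
    if current_cost < 0:
        raise ValueError("current_cost must be non-negative")
    if low_rise > high_rise:
        raise ValueError("low_rise must not exceed high_rise")
    return current_cost * (1 + low_rise), current_cost * (1 + high_rise)


# Hypothetical current-quarter DRAM spend (assumed for illustration only).
CURRENT_QUARTER_SPEND = 100_000

low, high = projected_cost_range(CURRENT_QUARTER_SPEND, 0.58, 0.63)
print(f"Projected next-quarter spend: {low:,.0f} to {high:,.0f}")
```

The same function can be rerun with a buyer's own figures, or with a narrower range once contract quotes are available.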

On the high-bandwidth side, Micron announced high-volume production of its HBM4 36GB 12-high stacks in March 2026. These stacks deliver more than 2.8 TB/s of bandwidth with 20% better power efficiency than HBM3E and are explicitly designed for NVIDIA’s upcoming Vera Rubin GPU platform. However, industry reports indicate that SK hynix and Samsung are expected to capture the bulk of premium Vera Rubin HBM4 allocation (roughly a 70/30 split), with Micron positioned more strongly in inference-focused or mid-tier systems, alongside its new PCIe Gen6 SSDs and SOCAMM2 modules.

Enterprise buyers are now being advised to lock in memory LTAs immediately to avoid further volatility, as spot pricing can shift hourly in some channels.

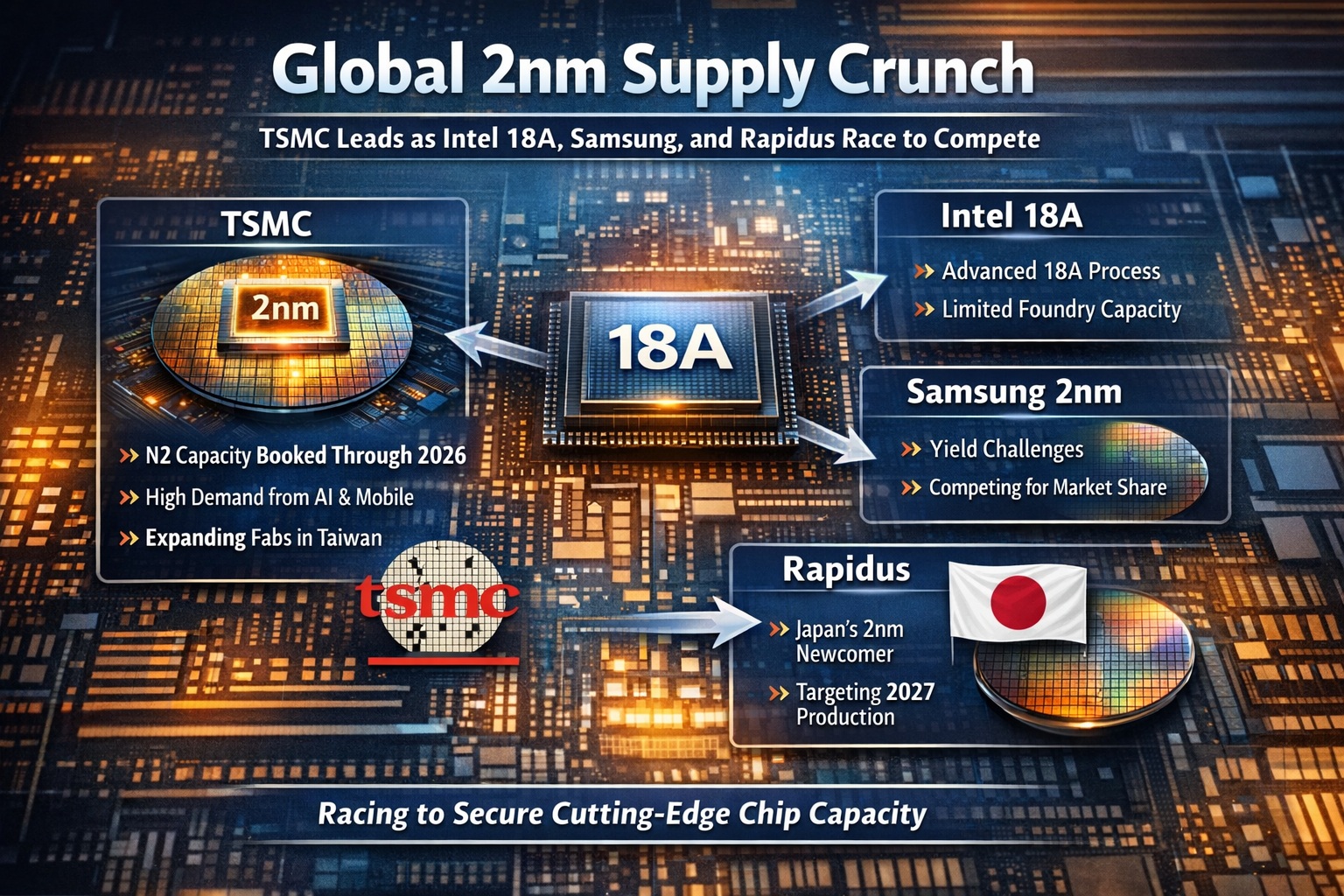

Intel 18A vs. TSMC 2nm: The Process Race Heats Up

Longer term, relief could come from advanced process nodes. Intel’s 18A process has seen yields climb above 60% and is now in volume production for its own Panther Lake chips and select foundry customers, including Microsoft’s Maia 2 AI accelerators and Amazon’s custom silicon. Intel still trails TSMC’s N2 (2nm) process, which entered volume production in late 2025 with initial yields reportedly at 65-80%, but its PowerVia backside power delivery offers efficiency advantages that some hyperscalers find compelling for AI workloads.

TSMC remains the clear leader in capacity and ecosystem maturity, but Intel’s progress has already secured it meaningful external design wins, helping diversify supply chains away from Taiwan. The outcome of this race will determine who captures the next wave of custom AI silicon in 2027 and beyond.

Peak Pain in Q2, Gradual Easing Later in 2026

Most analysts agree the server CPU shortage will remain acute through at least the second quarter of 2026, with the tightest supply in Q2 as AI buildouts accelerate. Meaningful relief is unlikely before late 2026, and even then, only for customers with secured allocation. DRAM and HBM shortages are expected to persist into 2027.

For data center operators: Prioritize LTAs now, consider hybrid CPU-GPU architectures optimized for agentic workloads, and evaluate Intel’s 18A-based systems where power efficiency and supply diversification matter.

For investors: The crunch is bullish for Intel (INTC), AMD, and Micron (MU) in the near term, but sustained pricing power depends on execution on yields and new capacity.

For everyone else: Expect higher server and PC pricing to flow through the supply chain, with ripple effects on cloud instance costs and enterprise hardware budgets.

The AI hardware crunch has evolved from “just buy more GPUs” to a full-stack supply chain challenge. CPUs, once an afterthought, are cool again — but for now, they’re also painfully scarce. Stay tuned for Q2 earnings updates; they will likely set the tone for the rest of the year.
